CONVERGER #2 — YouTube’s Chilling Crackdown on AI Creators

Also: AI labs hypocritically condemn everyone else's internet scraping; viral internet creators dominate horror cinema; Noah Hawley is the franchise whisperer

Welcome to CONVERGER, a biweekly newsletter mapping the content singularity where AI and the internet collapse all media into one–a connective node where emerging technology, policy, culture, futures thinking and storytelling intersect.

Converger presents news and views from an AI, internet and media policy expert who is pro-innovation but anti-hype, allergic to both AI panic and AI boosterism, and passionate about supporting rather than supplanting human creativity with new technology.

Some issues may be heavier on media commentary, others on AI policy, others on personal passions like sci-fi’s influence on technology (both for good and bad) or the evolving medium and business of comic books in the digital age. You never know what threads might come together in convergence-space!

I’m Kevin Bankston, your host. I’m an AI and internet law and policy expert, media nerd, and occasional fiction writer who works at a DC tech policy think tank and teaches AI and copyright law at a local law school. You can watch me develop newsletter content in real-time on LinkedIn and the social network formerly known as Twitter, and less often on Bluesky and Instagram. You can also look for my more wonkish takes on AI governance at Elicitation, the new Substack from my AI policy day-job colleague Miranda Bogen of the Center for Democracy & Technology’s AI Governance Lab. (Note that my Substack articles don’t necessarily reflect CDT’s positions.)

This week’s edition is unexpectedly YouTube-heavy: the video platform is a key player in the first two features and gets a big mention in the third. This week’s edition is also just heavier, generally: although I characterized the previous 8000-word issue as a “super-sized first edition,” this second one is closer to 10,000 words. Turns out I have a lot to say about what’s happening in the world of AI and content convergence!

So, I may be shifting from biweekly to weekly sooner rather than later to spread my content out. But the very next edition likely will not be out for three weeks, as I’ll be taking a vacation in the interim. Hopefully this super-mega-sized edition will tide you over until then.

So let’s go! And please share with your friends and colleagues if you enjoy!

TABLE OF CONTENTS

FEATURES (>500 words)

“Deepfake” Detection Plus Demonetization Decimation Pose Double Threat to YouTube’s AI Creators (1527 words, 6 minute read)

The YouTube Generation Has Taken Over Horror Cinema (1546 words, 6 minute read)

Noah Hawley, the Multidimensional Franchise Expander (1545 words, 6 minute read)

Model Hypocrisy: Unconsented Data Scraping for Me But Not for Thee? (1435 words, 5.5 minute read)

A Hollywood AI Pipeline Built on Chinese Models? (537 words, 2 minute read)

How Not To Integrate AI Into Newsrooms: McClatchy’s AI-Driven Byline Blues and OpenAI’s Slop News Site (550 words, 2 minute read)

Hannah Einbinder Swirlie Watch: Who’s Getting Flushed for Using AI This Week? (595 words, 2.5 minute read)

FRAGMENTS (<500 words)

Creator, Trademark Thyself

ChatGPT Images 2.0 Can Now Fake Your Doctor’s Note

Affleck Tops List of Most Powerful AI Players in Hollywood

New Creative AI Integrations, Integrating AI Creatively

Because Parasocial Relationships with AI Aren’t Weird Enough

Because Parasocial Relationships with AI Aren’t Weird Enough, Part Two

The Other Ryan Gosling Astronaut Movie

Warner Bros. Shareholders Approve Paramount-Skydance Merger Bid

Window Treatment: (Almost) All Studios To Let Movies Stay in Theaters Longer

Proving (or Pretending) You Didn’t Use AI for Your Writing

The Actors Guild Deal Has…Some Sort of AI Protections?

AI Film Wins at a Traditional Film Festival for First Time; Academy Nixes Awards for AI Writing and Performances

44% Slop and Rising: AI-Generated Music on Deezer

FEATURES

“Deepfake” Detection Plus Demonetization Decimation Pose Double Threat to YouTube’s AI Creators

The same morning the first issue of Converger dropped, YouTube announced that it is opening its AI-powered deepfake detection system to all of Hollywood. The tool will let any actor, athlete, musician, or creator who worries their likeness might be co-opted upload it to YouTube, get flagged when potential replicas appear, and request takedowns. The system has been quietly available to top creators and a select group of politicians and public officials for months; now it’s going wide. The talent agents quoted in The Hollywood Reporter’s exclusive coverage were practically giddy. Forgive me for not also applauding.

As YouTube’s Chief Business Officer admits in the story, this is basically like Content ID–YouTube’s tool for detecting copyrighted content–but for famous people’s faces. And as we all know, Content ID has never interfered with legitimate speech.

I very much want to know more details about this new initiative, but the story is light on specifics. How will the tool distinguish between AI-generated and real-life images of public figures (if at all)? How will YouTube apply carveouts for news, criticism, parody, and satire, types of speech for which public figures are particularly legitimate targets? On what right or policy would takedown requests related to abusive use of replicated faces even be based? What counts as “abuse” or a “replica,” anyway? Only YouTube knows.

Frankly, I don’t have high hopes that this will avoid causing a lot of collateral damage to legitimate expression on YouTube’s platform, based on what I’ve seen all over Twitter the past few weeks: endless complaints from creators using AI in their otherwise human-created YouTube videos (and many who don’t), who are being demonetized by YouTube’s automated processes to detect “inauthentic content” that violates its policies. If the apparently unjustified breadth of this crackdown is any indication, I’d expect the special protections for celebrity faces to be applied in an equally imprecise manner.

I’ve yet to see anything from the mainstream tech or media press on this demonetization issue, but it’s been absolutely dominating my Twitter feed. A few examples:

Wow. So YouTube claims my content isn’t authentic despite the overwhelming majority of my videos being videos of myself on camera and my own music recorded live in a studio. @TeamYouTube I’ve been a content creator with you for over a decade. What’s going on right now?—@ThePholosopherX

I run 2 YouTube channels (714K and 350K subs) with more than 400 million views on long-form videos. I create comedy podcasts and gaming content, and I do everything myself: filming, editing, and appearing on camera. Overnight, both channels were demonetized. I made 2 appeal videos—zero views. Every appeal was rejected with the exact same copy-paste automated message. No human support from YouTube. After 12 years and building two big communities, this treatment is unacceptable. –@DeliresdeMax

I’m a solo creator who runs the channel Sacred Stuff. It’s a cinematic storytelling channel where I post very low-volume, heavily detailed animated stories. I have over 80,000 subscribers and have only 4 videos. I was marked for inauthentic content. The policy specifies content that is mass-produced or repetitive. None of my stories are repetitive at all, and I only post once a MONTH, so they’re not mass-produced. Each video takes about 500 hours of human work. I walk through my entire process in this appeal video below[.] Can a human please review this?—@sacredstuf [UPDATE: it looks like this Twitter account has just been closed, and the creator has started a new account, @sacredstuffYT]

Seems there is a mass YouTube demonetization with channels being slapped as ’Inauthentic Content’. Affecting both AI & non AI creators. YT is not handling this AI wave well. I understand demonetizing those fully AI generated content farms that upload multiple times a day. But why take down animators, AI assisted storytellers & even some completely non AI channels? Is this another youtube apocalypse?—@AzeAlter

Self-proclaimed “viral AI storyteller” BLVCKL!GHT catalogued more affected creators:

This week, several of us were demonetized by @TeamYouTube for “inauthentic content.” None of us received an explanation.

Myself (https://youtube.com/@blvcklightai?si=_ONM9ogeiKqBNbwi) is a two-time Escape Awards-winning AI filmmaker whose work has been exhibited internationally and across multiple streaming platforms.

Aze Alter (https://youtube.com/@azealter?si=JIvdVFeVvI1G1oQO) is a writer and director behind some of the most recognized sci-fi worldbuilding series in the AI filmmaking space, with 230K+ YouTube subscribers.

Mart Zien (https://youtube.com/@azealter?si=JIvdVFeVvI1G1oQO) is a Cannes-credited film producer whose AI work has swept major festivals, been covered in Forbes, and been cited on the Masters of Scale podcast. [Note the correct name is Matt Zien, and the correct URL is https://www.youtube.com/@kngmkrlabs]

Javi Lopez (https://youtube.com/@javilopen?si=FU6UouE386mQAOOQ) is the founder of Magnific AI, a tool used in the VFX of a major Robert Zemeckis film starring Tom Hanks.

One search tells you exactly who we are and what we do.

So when @TeamYouTube and @YouTubeCreators label our work “inauthentic” with no definition, no breakdown, and no appeal path worth using, that’s not moderation. That’s a platform that has stopped being able to tell the difference between spam and craft.

Notably, although this demonetization occurs because these videos are nominally unsuitable for advertising against, that won’t actually stop YouTube from continuing to stick advertisements on them while not giving creators a cut.

It’s also ironic to have Google promoting its state-of-the-art Veo video generation model with one hand, and then punishing AI creators on its platform with the other, an inconsistency captured well by one affected Twitter user in graphic form:

Meanwhile, YouTube’s primary message–rather than actually giving clearer guidance on what will get you demonetized, or more specific explanations to the creators about why they were demonetized–has essentially been “if you disagree, change your content or file an appeal.” The same message was repeated in a more reassuring tone by the co-founder of AI studio Promise, who previously helped build YouTube’s monetization program:

We checked in with YouTube about the demonetization issues impacting the Gen AI creator community. They are continuing efforts to reduce repetitive, spammy content so more original, story-driven work can rise. The system isn’t perfect, so if you were flagged incorrectly, appeal. That feedback helps improve things over time. There’s a wave of bold, original work coming from AI creators right now, and we will continue to advocate for you.

And it does seem that the appeal process is working to some extent; a number of the louder voices that complained on Twitter, including BLVCKL!GHT, have now been reinstated. That’s both good and bad: good that many mistakes have been corrected; bad that so very many were made in the first place. Clearly, YouTube’s automated censors are not well-calibrated and these digital dragnets are catching a lot more than intended.

At least some of those creator-complainants were savvy enough to specifically call out the European Union’s Digital Services Act in their appeals. That law requires a specific explanation of demonetization or deplatforming decisions by user-generated content platforms, in addition to requiring an appeals process. I’ve now seen a lot of people reposting their notices from YouTube and they certainly don’t feel very specific–just restatements of the very vague, broad, subjective provision in the terms of service against “inauthentic content,” which originated from an expansion of the “repetitious content” policy in July 2025:

Inauthentic content refers to mass-produced or repetitive content. This includes content that looks like it’s made with a template with little to no variation across videos, or content that’s easily replicable at scale…. Examples of what’s not allowed to monetize (this list is not exhaustive):

Content that exclusively features readings of other materials you did not originally create, like text from websites or news feeds

Songs modified to change the pitch or speed, but are otherwise identical to the original song

Similar repetitive content with low educational value, commentary, narratives, or minimal variation across videos

Mass-produced content using a similar template across multiple videos

Image slideshows or scrolling text with minimal or no narrative, commentary, or educational value

To the extent I understand what the above includes, it seems that many of the folks complaining don’t fit the bill–and apparently YouTube (eventually) agrees, considering many of the reinstatements.

YouTube certainly has a right to make choices about what’s on its platform, and there is indeed a lot of AI (and non-AI) engagement-farming garbage that it can and should work to get rid of. But right now it’s clearly getting the balance wrong–either in terms of how it has written its “inauthentic content” policy, how it is interpreting it, how it is automatically applying it, how it is explaining those decisions, how it is handling appeals to those decisions, or all of the above.

YouTube needs to take a beat to pause this decimating wave of demonetization, take another look at its DSA obligations and the Santa Clara Principles on Transparency and Accountability in Content Moderation that helped inspire them, and go back to the drawing board. It should be equally cautious and much more forthcoming about its deepfake detection initiative, as well. Otherwise YouTube risks stifling the very same AI-driven creativity it is promising to enable with Google’s own AI models, and pushing the next generation of tech-enabled talent to use another platform.

The YouTube Generation Has Taken Over Horror Cinema

The poorly-targeted wave of demonetization currently hitting YouTube video creators takes on additional resonance when you look at how important that platform has been to cultivating the film talent of the future. In our last issue, I highlighted the upcoming feature film Backrooms as the latest product of the YouTube-creator-to-Hollywood-director pipeline. Several items have come across my timeline since then highlighting that not only is that pipeline flowing, it’s near bursting with fresh talent. But for how long?

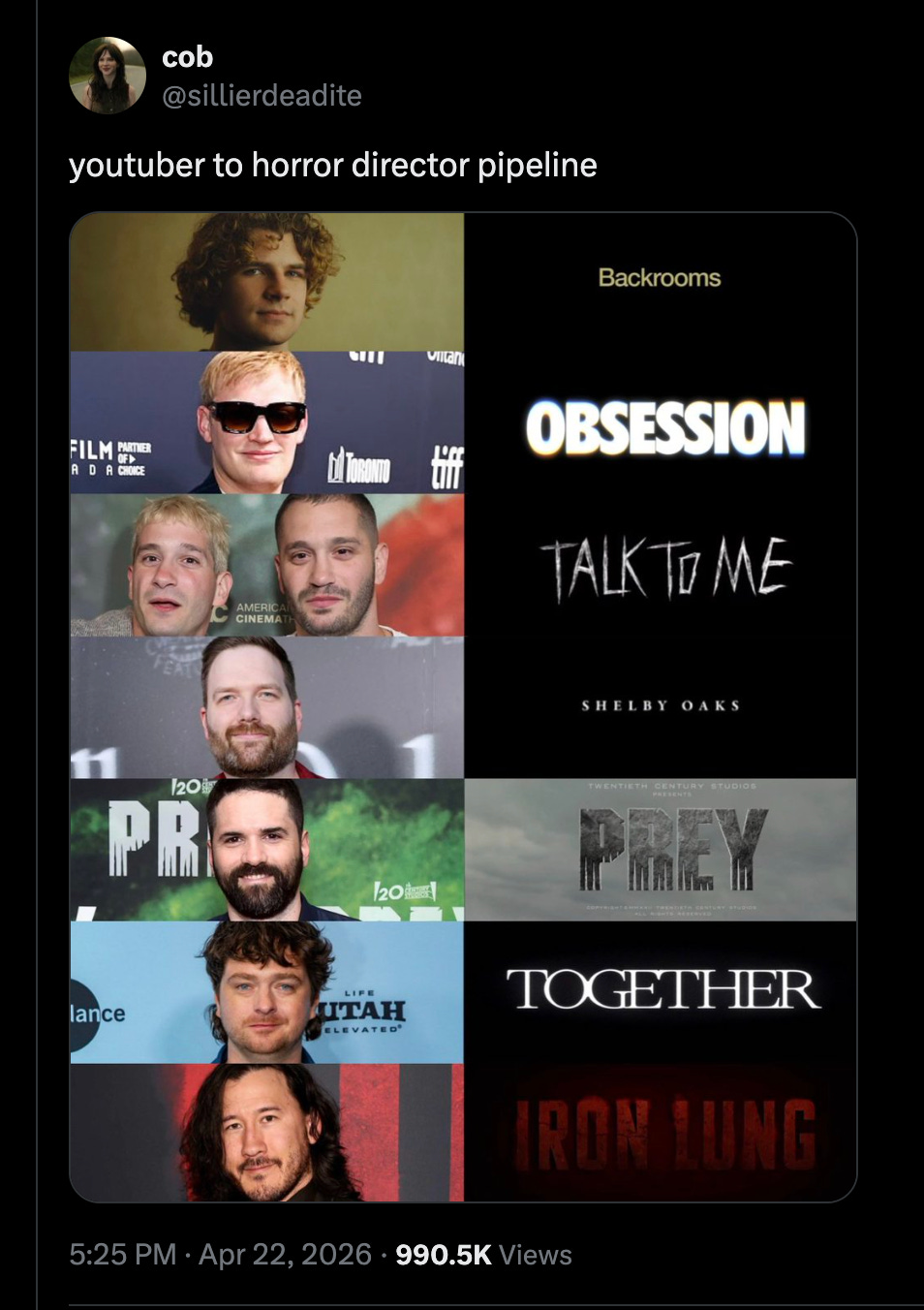

The first item was this meme-chart, highlighting a huge roster of feature horror directors with YouTube roots:

The marquee example is Kane Parsons (AKA Kane Pixels), director of Backrooms, whose story is illustrative of this internet trend not just because he’s a YouTube creator but because he’s one who made his bones expanding on creepypasta internet lore and relied on design software to render it.

The concept of the Backrooms grew out of an unattributed photo and text snippet on 4chan and blossomed into an entire subculture of creators telling stories based in a horrific and endless liminal space of empty, sickly-yellow office hallways, an other-dimensional labyrinth pulsing with mundane dread.

Parsons became the preeminent chronicler of the Backrooms. His debut video in January 2022 was made by the sixteen-year-old Parsons in a month using the open source 3D modeling software Blender and Adobe After Effects; together with its more than twenty sequels, it has been viewed nearly two hundred million times. And now, at twenty years old, he’s the youngest director of any feature film released by A24, Hollywood’s preeminent purveyor of elevated horror films. (And, on a tech side-note, he used Blender extensively for the feature film too.)

Next up in the chart is Curry Barker, whose debut feature–love-spell-gone-wrong horror flick Obsession–is coming to theaters on May 15. Until last week he was the second-biggest name-to-watch on this list, but today he is arguably the biggest. Barker came up through That’s a Bad Idea, the YouTube comedy sketch channel he runs with Cooper Tomlinson (favorably compared to I Think You Should Leave), then dropped the $800 viral horror short Milk & Serial on YouTube in 2024. A few days ago he wrapped principal photography on his next horror pic for Focus, Anything But Ghosts, produced by Blumhouse-Atomic Monster and Spooky Pictures, and he just signed on to direct A24’s Texas Chainsaw Massacre reimagining, also produced by Spooky Pictures. Three features in the pipeline and the keys to a major IP, all before his theatrical debut.

The remaining examples are equally impressive:

Danny & Michael Philippou: Creators of RackaRacka (6.9M subs), an Australian comedy channel built on hyper-violent practical-effects sketches, the Philippou brothers directed 2023’s Talk to Me and their 2025 follow-up Bring Her Back, both for A24.

Chris Stuckmann: Stuckmann spent over fifteen years as one of YouTube’s most respected film critics (2M subs), then jumped from reviewing horror to making it with Shelby Oaks. Its Kickstarter raised $1.4M. Mike Flanagan came on as EP. Neon distributed.

Dan Trachtenberg: The granddaddy of this list. His 2011 live-action Portal: No Escape fan short, made on a shoestring and posted to YouTube, got him 10 Cloverfield Lane, and that got him Prey and now Predator: Badlands. He’s proof that this pattern long predates the current wave.

Michael Shanks: timtimfed, Shanks’ Australian YouTube comedy channel of surreal genre shorts, ran for years before his debut body-horror feature Together, produced by its stars Alison Brie and Dave Franco, broke at Sundance and grossed $32M worldwide last year.

Mark “Markiplier” Fischbach: 38M-sub gaming YouTuber Fischbach first played Iron Lung, the David Szymanski indie sci-fi horror game, on his stream in May 2022. He then optioned and self-financed the film adaptation, clearing over $50M worldwide earlier this year on a $3M budget and without traditional studio backing.

An illustrious roster, but what’s most notable about this meme is that based on news from the past few weeks it is already out of date, since there are at least four more creators of viral internet media with recent news about their burgeoning directorial careers:

Sam Evenson: Evenson is a VFX artist (Dune: Part Two, The Last of Us) who runs the YouTube channel Grimoire Horror. His viral 12-minute YouTube short Mora, about a haunted image generation AI model, sold to Neon two weeks ago, with Evenson writing and directing the feature. Like Barker, his production is backed by Spooky Pictures’ Steven Schneider and Roy Lee. Notably, Lee also was one of the producers who launched the feature career of Zach Cregger (Weapons, the upcoming Resident Evil reboot) with his breakout debut Barbarian. (Like horror director-producer Jordan Peele and many others on this list, Cregger started in comedy before transitioning to horror, as a writer-performer in the sketch group The Whitest Kids U’Know.)

Ian Tuason: Tuason has the weakest YouTube connection on this list, but Erik Davis of Rotten Tomatoes and Fandango grouped him with the others in a recent tweet and he’s not wrong: “It’s interesting that A24 is not-so-quietly recruiting filmmakers like Kane Parsons, Curry Barker, the Philippou brothers, Ian Tuason—all guys who created viral content prior to their big-screen debuts.” In this case, Tuason made indie shorts through the 2010s; his 2025 horror feature Undertone started life as a radio play, was shot for $500K in his childhood home, won the Fantasia audience award, sold to A24 in a mid-seven-figure bidding war, and pulled a $9.3M domestic opening weekend. A month ago he confirmed in an interview with Phantasmag that A24 will be producing an Undertone prequel, and a third entry is being discussed. He is also lined up to direct Paranormal Activity 8 for Blumhouse.

Casper Kelly: Kelly’s the delayed-action case. His Too Many Cooks—a horrifyingly surreal 11-minute Adult Swim sitcom parody from 2014—became one of the defining viral shorts of the 2010s after escaping its late-night cable slot onto YouTube and Vimeo. Twelve years later, that viral artifact is getting him his feature directorial debut. Buddy, a Sundance Midnight standout about a tyrannical children’s TV unicorn, was acquired two weeks ago by Roadside Attractions and Saban Films for a Labor Day wide release. The pipeline doesn’t always run on the four-year arc Parsons modeled; sometimes a viral artifact sits in the cultural memory for a decade before the producers that are game to back that auteur show up. (Buddy is produced by BoulderLight Pictures—the indie horror shingle that launched Zach Cregger’s career with Barbarian—alongside Low Spark Films.)

Dylan Clark: As announced just last week, this YouTube horror short director (his Portrait of God has nearly 9M views) has been tapped for the Blair Witch reboot from Lionsgate and Blumhouse at a $10M budget. Clark also has Portrait of God in development as a feature at Universal with Sam Raimi and Jordan Peele producing, and a third feature Story Time set up at LD Entertainment. Three projects, one debut feature, and the two most prestigious horror producers alive in his corner. It’s also worth flagging that Steven Schneider–the Spooky Pictures producer behind Curry Barker’s Texas Chainsaw and Sam Evenson’s Mora–is also EP on Blair Witch.

Having one of these YouTube kids directing a Blair Witch flick seems like a full-circle moment, since that film’s low-budget found-footage aesthetic and its viral internet lore-marketing were such clear influences on projects like Backrooms.

All of the above demonstrates a few takeaways about this internet-to-theaters horror trend (a trend that just before press time on May 6th was also reported on by Matthew Frank at the Ankler, with a focus on Parsons, Barker, and Clark):

First, this pipeline isn’t a new phenomenon but a mature one, and between A24, the Blumhouse folks, and Spooky Pictures, there is now a substantial contingent of star horror producers who clearly have made the YouTube-to-feature handoff a primary deal-making lane. That trend is likely to continue and expand. And although A24 is still the studio leading in YouTube-to-silver-screen conversions, all the other studios are starting to get in the game.

Both comedy and horror are the pathway, but horror is always the feature destination. Why? Two structural forces are doing the work. Genre: horror is the last mass-market feature category Hollywood still makes both cheap and often—Iron Lung on $3M, Obsession shot for under $1M, Shelby Oaks on $2.8M. There is no equivalent low-budget pipeline for drama or comedy. Craft adjacency: horror and comedy are both nervous-system genres, utilizing the same skills of tension build and release that can lead either to a belly laugh or a fearful scream or both. Someone who has spent a hundred videos learning how to land a sketch beat or a jump scare on a thirteen-year-old’s algorithm-addled attention has been training the exact muscle the genre rewards.

It’s all dudes. Almost all white dudes, except for Filipino-Canadian Ian Tuason and half-Korean Markiplier. I refuse to believe there aren’t plenty of equally talented women and people of color jamming out YouTube content that demonstrates their capability to take on a small-budget horror pic. Producers and studios need to go find them.

And the final takeaway: YouTube is cracking down on AI-driven creators at its peril, risking the next generation of Kane Parsons-style innovators who use the most available technology to quickly ship their most viral ideas to a hungry audience. Yes, there are slop-meisters that need to be demonetized, but there are also budding auteurs who may never flower, or will move to flourish on another platform, if YouTube can’t calibrate its censor-bots to distinguish the two.

Noah Hawley, the Multidimensional Franchise Expander

The default mode of Peak IP is extraction. Studios hold a vault of recognizable names, and the job of each new season, sequel, or spin-off is to get more ore out of the same shaft: same story beats, same characters, same visual language, diminishing returns.

We have a decade of receipts now on what that looks like. Cinema and TV are dominated by nostalgia reboots, legacy sequels, cinematic universes that can no longer remember why they exist. And we’re about to find out what it looks like when generative AI makes the cost of extraction approach zero. Slop strip-mining of old IP, be it authorized or unauthorized, is the endpoint of the extraction model.

Which is why it’s worth naming, precisely, what Noah Hawley–the TV showrunner for FX’s Fargo, Legion, and Alien: Earth–is doing instead.

Two things recently put Hawley back in front of me. The first was his essay in The Atlantic on the consequence-free reality of the contemporary billionaire, based on his visit to Jeff Bezos’ “Campfire” retreat; it was a big part of The Internet Discourse a couple weeks ago, and also offered an interesting lens with which to view the billionaire villain in his Alien series.

More interesting to me, though, was his Canneseries interview, also at the end of April, in which Hawley repeated a label someone else hung on him recently: “franchise whisperer.”

The label is fine. But it’s not precise enough. What I see Hawley doing across Fargo, Legion, and Alien: Earth isn’t just coaxing new life out of old IP. It’s a structural method, and once you see it, you can’t unsee it.

I would label Hawley as a three-dimensional franchise expander: someone who extends other people’s properties along distinct axes, and who picks a different axis each time depending on what the source material is actually asking for.

One might ask, why focus on expanding other people’s source material at all? Hawley is a uniquely gifted writer-creator who certainly has the capacity to craft his own original stories; he’s already done so repeatedly as a novelist.

The answer is in the same Canneseries conversation, where Hawley candidly described the competitive landscape for TV. His biggest competition, he said, is YouTube: a platform that spends nothing to produce content while he’s spending $250 million per season of Alien: Earth. When the cost of content collapses to zero, recognizable brands become the lifelines that pull eyeballs back to expensive storytelling. Which is why how you build on those brands–extractive vs. additive, mining vs. growing–becomes the central craft question of the infinite-content era.

Hawley’s craft is not extracting, it’s expanding. Or, to cite an old bit of advice from comic book writers, the original shared-fictional-universe creators: when you take a toy out of the franchise toybox for your run on a comic series, you need to make sure you put it back right when you’re done–and ideally add a few new toys to the box for the next creator to play with.

Noah Hawley always creates new toys. Watch him work along three axes:

FORWARD: Fargo

The easy thing to do with the Coens’ 1996 film would have been to retell it at a more leisurely and detailed pace, or extend it by giving Minnesota detective Marge another quirky case to sleuth. Hawley did none of that. Fargo the series carries the Coens’ tones and structures forward into new contexts: new decades (1979, 2006, 1950, 2010, 2019), new casts, new crimes, new moral quandaries. Hawley essentially treats the Coens’ entire filmography as the source—Miller’s Crossing, No Country, A Serious Man, Burn After Reading—and lets that whole sensibility propagate through different American eras.

What’s striking is that Hawley is explicit about what each season is for. At Canneseries he described Season 2 as the death of the family business and the rise of corporate America; Season 3 as a deconstruction of “this is a true story” in the era of alternative facts; Season 5 as a meditation on whether the Yellowstone worldview–the idea that a man simply knows in his bones what’s right–is heroism or villainy. Underneath all of these thematic variations, he said, both Fargo the movie and Fargo the TV show have always been about the battle between decency and cynicism, and in America right now decency isn’t winning.

This is franchise expansion by forward translation: applying prior themes, tones, and tropes to new stories with new characters in different time periods. The franchise gets bigger not because more of the same thing happened, but because the sensibility proved portable, and because each iteration carries a fresh diagnostic claim about the country it’s depicting. Which, incidentally, is exactly what the Atlantic billionaire essay is doing in prose form. Hawley has a thesis about consequence and decency, and Fargo is the long-running fictional laboratory for it.

DOWN: Legion

Hawley’s approach to Legion was a very different maneuver. When faced with the opportunity to do a television show based in the expansive X-Men universe, Hawley monomaniacally drilled down into one character: David Haller, a psychically gifted, schizophrenia-diagnosed mutant from a C-list corner of the comics. The result was three seasons of unapologetically weirdo television: bizarre Bollywood dance numbers staged inside psychic battles, silent-film interludes, rap-battle telepathy, a season-long time loop, a villain living inside the protagonist’s skull. He went deep–into the character, into the psyche, into his own artistic preoccupations–and instead of just pulling more of the same from that mineshaft, Hawley excavated something uniquely his.

His Canneseries pitch for the show was “What if Breaking Bad was about Walter White becoming a supervillain? I found Professor X’s son, who’s mentally ill, basically. He has these powers but he’s not sure whether they’re real. And if he doesn’t know, then that’s the show.” He made a deeply philosophical and deeply freaky show all about the instability between what’s real and what’s in your head. You can’t do that shit with Wolverine.

Expansion through depth of specificity in exploring weird dark corners. The franchise gets bigger because one narrow slice got unimaginably deeper.

OUT: Alien: Earth

Alien: Earth—FX’s biggest streaming premiere ever, 94% on Rotten Tomatoes—is Hawley doing the opposite of Legion. Instead of drilling down on the Xenomorph, he broadens the world horizontally. The franchise has spent 45 years on one creature (the titular alien), one megacorporation (Weyland-Yutani), and one form of AI (synthetic persons). Hawley’s 2120 has five corporations (Prodigy, Weyland-Yutani, Lynch, Dynamic, Threshold), three categories of artificial person (synthetics, cyborgs, and the new hybrid synthetics with uploaded human consciousnesses), and a whole menagerie of horrific new alien species beyond the Xenomorph (blood ticks, octopus eyes, the orchid and the fly nest).

Hawley also broadened the franchise’s applicability to now by literally bringing it home to Earth, and using our current anxieties about AI, billionaires, and infectious, out-of-control biological processes against us.

This is expansion by horizontal world-thickening. The franchise gets bigger thanks to Hawley populating it with more, and more thematically rich, versions of what made us love the series in the first place. The title Alien always had a double-meaning, both a noun and an adjective. Hawley ran with the adjective and broadly applied it to new creatures, new minds, new corporate entities, new fears of the unknown.

Forward, Down, and Out

Hawley has found a way to rely on pre-existing storyworlds without exhausting them, a way to diagnose a property for what it most needs from him–and what he most needs from it–and pick a new direction. He doesn’t choose the linear-thinker path of retelling the story (the Die Hard 2 model), or extending the plot with the same characters (the Star Wars model), or telling a new adventure of the same protagonist in the same style (the Raiders model). He’s the kind of showrunner who tellingly doesn’t rewatch the originals he is adapting before he starts. He works from the emotion he remembers, then asks how to recreate it but in a completely new way.

This is why Hawley’s particular skill is so uniquely valuable in this media environment. What’s scarce in this landscape isn’t content; it’s authorial judgment about which direction to grow a property, and why. A generative model can produce infinite Fargo-flavored dialogue, infinite Legion-style freakouts, infinite Xenomorph variants. It cannot decide that this IP needs forward-translation and that one needs vertical compression and that one needs horizontal thickening. It can’t preserve and expand on the feeling of a storyworld so that the brand is still recognizable to audiences but the form it takes is novel enough to intrigue them. The diagnosis is the craft.

As Steven Spielberg argued at CinemaCon a few weeks ago, Hollywood needs to invest in more original IP rather than trying to squeeze every last drop out of old IP; otherwise it will creatively “run out of gas.” That’s certainly true. But if you’re going to invest in legacy IP, and want to do so in a way that is additive rather than exploitative, there’s no one better than 3D franchise expander Noah Hawley. IP extensions will necessarily continue to be a dominant form in the mediasphere as studios compete with a flood of free online content, but hopefully Hawley’s uniquely creative approach will help inspire the next generation of franchise adapters to think in more than one dimension.

Model Hypocrisy: Unconsented Data Scraping for Me But Not for Thee?

In the last issue of Converger I highlighted the raft of copyright lawsuits against AI labs currently being litigated in the US and around the world, including challenges to their use of masses of copyrighted expression scraped from the internet to train their models. The labs have consistently argued that using that data for training is defensible as a transformative “fair use” under copyright law, and the White House in its AI Action Plan made clear it agreed.

However, the White House’s Office of Science and Technology Policy (OSTP) just released on April 23rd—based on apparent pressure from the labs themselves—a saber-rattling memo that is directly and hypocritically in tension with that fair use position. Specifically, the memo condemned distillation “attacks” (i.e., automated querying of model outputs for the purpose of training new models) by Chinese AI labs against US models, after OpenAI, Anthropic, and Google DeepMind all individually complained of the same in February. The US labs variously complained about these “cyber-attacks” as “free-riding” “intellectual property theft” of “proprietary” data, and the White House followed suit:

[T]he United States government has information indicating that foreign entities, principally based in China, are engaged in deliberate, industrial-scale campaigns to distill U.S. frontier AI systems. Leveraging tens of thousands of proxy accounts to evade detection and using jailbreaking techniques to expose proprietary information, these coordinated campaigns systematically extract capabilities from American AI models, exploiting American expertise and innovation.

I am mindful that US labs are in a technical race with Chinese labs that has significant economic and national-security implications, and if the US government officially complaining about distillation helps dissuade Chinese action here (it won’t) then please, go ahead and rattle that saber. But these complaints are broadly and indeed hypocritically in tension with the positions of the AI labs and the US government in other contexts.

First and most obviously: US AI labs trained their models based on “industrial-scale” unconsented copying of other people’s copyrighted expression, and for them to argue that was fair use while claiming that training on their data is not a fair use is wholly contradictory. Indeed, there are at least four arguments why model distillation is more likely to benefit from the defense of fair use than training on indiscriminately-scraped copyrighted works, or may not even implicate copyright law at all.

One: There is a long line of cases standing for the proposition that studying outputs from a technology to reverse engineer it is a fair use, including Sega Enterprises Ltd. v. Accolade, Inc., 977 F.2d 1510 (9th Cir. 1992) (disassembling Sega Genesis game code to make compatible third-party games was fair use); Atari Games Corp. v. Nintendo of America Inc., 975 F.2d 832 (Fed. Cir. 1992) (“reverse engineering, untainted by the purloined copy of the 10NES program and necessary to understand 10NES, is a fair use”; Atari lost on its specific facts only because it had obtained Nintendo’s source code from the Copyright Office by lying about pending litigation); and Sony Computer Entertainment, Inc. v. Connectix Corp., 203 F.3d 596 (9th Cir. 2000) (intermediate copying of Sony’s PlayStation BIOS during the development of an emulator was protected fair use, even where the resulting product directly competed with Sony’s console).

These cases together stand for the proposition that observing what a system does—including copying its outputs—to build a competing system is a fair use, particularly where the underlying functional elements are not themselves copyrightable subject matter. Notably, the underlying models themselves, which are complex functional mathematical objects created by algorithmic processes, may not be copyrightable under current law at all.

Two: At least in the opinion of the Copyright Office, generative outputs are not copyrightable for lack of a human author, meaning a fair-use defense would not even be necessary since the supposedly “proprietary” outputs are not copyright-protected. See U.S. Copyright Office, Copyright and Artificial Intelligence, Part 2: Copyrightability (Jan. 29, 2025) (Fully AI-generated outputs generated in response to a human input or prompt lack human authorship and are therefore not copyrightable).

Three: To the extent there is a copyright interest in outputs, the labs have typically assigned that interest to the users eliciting the outputs, and disclaimed their own. OpenAI’s Terms of Use provide that “you... own the Output” and “we hereby assign to you all our right, title, and interest, if any, in and to Output” (emphasis mine; the “if any” is the lab itself hedging that there may be no copyright interest there at all). Anthropic’s and Google’s consumer terms are substantially similar. The labs have done this in part for self-serving liability reasons (in the context of potential infringement claims, they want to make clear that the outputs are your creation, not theirs), but the doctrinal consequence cuts the other way: a lab that disclaims ownership of an output cannot, in the next breath, claim that output as a proprietary asset that competing researchers must not be allowed to learn from.

Four and finally: To the extent the labs argue that distillation is prohibited by their terms of service, a lack of a copyright interest in their models or their outputs would call the legality of that prohibition into question (Mark Lemley and Peter Henderson argue that contractual restrictions on the use of AI outputs may be preempted or unenforceable where there is no underlying intellectual property interest to protect). Intellectual property only provides certain limited rights, not a general ability to dictate through contract how information and expression are used. Courts are quite unlikely to consider any outputs to be trade secrets if anyone can elicit them from a model that’s been exposed to the public; if they also aren’t copyrighted, there isn’t really another relevant type of IP law to rely on.

Indeed, the weakness of any legal argument against model distillation–and its contradiction of the labs’ own arguments in other cases–is perhaps best indicated by the fact that they haven’t sued anyone over it yet. And notably, the White House too fails to articulate any reason why these “attacks” are illegal.

Beyond not making sense as a matter of IP law, the White House’s position also contradicts its AI Action Plan’s dedication to fostering the growth of open-source AI models as a source of competitive innovation that isn’t centralized in the hands of a few large AI labs. Both Dean Ball—the AI Action Plan’s primary staff drafter while at OSTP—and open-source AI researcher Nathan Lambert of the Allen Institute for AI (Ai2) have discussed at length on Twitter how distillation is a common technique, including amongst academics, for testing and improving the AI state of the art and developing open-source models including smaller models that can run locally. That open-source ecosystem benefits all AI advancement, not just in China but here and around the world.

Nor is the use of distillation limited to the open ecosystem: for example, Elon Musk admitted on the stand last Thursday in his lawsuit against OpenAI that his company xAI has “partly” used distilled outputs from OpenAI models to help train Grok, calling it standard industry practice. The other labs have likely done the same to each other at times. As expressed by Clem Delangue, the CEO of open-source AI platform Hugging Face, all of this vibes a bit like the labs “pulling the ladder” up behind them:

All labs trained their models by distilling (at the very least distilling the web) which allowed them to become the fastest growing businesses in the history of humanity and now that they have armies of lawyers and lobbyists, they are trying to prevent others from doing the same thing.

For all these reasons, the complaints from the major labs and the US government about distillation by foreign competitors ring hollow. There is also the question, raised by Dean Ball, of whether the US government even “actually adds value in practice on the issue of combatting distillation, and whether that addition of value is larger than the risks associated with the USG paying close attention to the minutiae of frontier AI development.” This question is all the more salient now that the White House is reportedly considering prior restraints on the release of frontier models by US labs, a particularly heavy-handed and constitutionally dubious intervention in the name of security.

Perhaps, if the labs want to combat distillation more aggressively, they should simply invest more money in their own technical countermeasures and leave the rest of us out of it. Or they can sue someone, if they don’t think it will completely undermine their own fair use defenses in the scraping cases against them. But I wouldn’t hold my breath.

A Hollywood AI Pipeline Built on Chinese Models?

Following up on my piece in the first issue about the post-Sora video gen landscape, fresh news has validated my take: Netflix is investing heavily in both open and proprietary AI video gen tools, and traditional studios that don’t build their own tech stack will soon be at a distinct disadvantage.

Netflix—along with its subsidiary Eyeline Labs—followed up last month’s open-source release of the VOID model by dropping Vista4D, a “video reshooting framework” on GitHub. The tool takes previously shot video input and lets you change camera angle, add or modify camera movement, or dynamically expand the scene.

Like both VOID and the InterPositive tools that Netflix acquired from Ben Affleck, this new tool is focused on modifying already-shot footage rather than generating video from scratch with text prompts. Unlike those other tools, though, this one is built on top of Wan2.1-T2V-14B, a 14-billion-parameter text-to-video diffusion model open-sourced by Chinese tech giant Alibaba.

Netflix building on top of a Chinese video model is emblematic of a post-Sora technology trend identified last week by The Wall Street Journal: China is currently whooping American labs on the video generation front, in adoption of both open models like Wan and proprietary models like Kuaishou’s Kling, even as some Western labs (per TechCrunch) retreat from consumer video generation entirely.

As the WSJ reports, Chinese labs took seven of the top 10 spots for video-generation models last month on rating platform Artificial Analysis, including the widely popular (and in Hollywood, reviled) Seedance 2.0 from ByteDance and the new benchmark-smashing HappyHorse model from Alibaba.

And in the Ankler, Erik Barmack sketches a three-vertical landscape: social generation, led by xAI’s Grok; professional short-form video, led by Kling; and Runway for Hollywood pre- and post-production. Then again, Kling is popular in Hollywood too: as the WSJ points out, it’s Kuaishou’s model that was used by House of David’s producers for their virtual backlot, and presumably that’s what they’re using for their upcoming Moses series (discussed in our last issue).

And Asia’s not just leading on video models. While Hollywood debates whether AI belongs on a film set at all, Asian producers are racing ahead to integrate it into the production pipeline itself–slashing costs and compressing timelines on both feature films and microdramas.

In China, The Economist reports that AI-animation tools have cut microdrama production costs by up to 90%, with live-action microdrama production dropping 80% in some regions; The New York Times similarly reports on how AI is both fueling and disrupting the Chinese microdrama space.

In India, The Hollywood Reporter detailed last week what it called “the world’s most consequential live experiment in AI filmmaking”: roughly 80% of Indian films now use AI extensively in pre-visualization, AI-driven dubbing is rolling out at platform scale across a dozen languages, and Mumbai studios are producing AI-native features in six to twelve months versus the two-to-three years for traditional animation.

These two trends are converging faster than anyone expected: the center of gravity in video generation is moving to Chinese models, and the center of gravity in AI-integrated production is moving to Asia more broadly. Netflix is the one American studio racing ahead. The question is which other studios—or American AI labs—are even in the race.

How Not To Integrate AI Into Newsrooms: McClatchy’s AI-Driven Byline Blues and OpenAI’s Slop News Site

Moving from the artist’s studio to the newsroom: last issue we talked about the controversy over when and how to integrate AI into reporting, with some journalists choosing to leverage AI tools and others resisting. Since then we’ve seen a couple new lessons in how not to use AI in newsrooms.

First lesson is from media company McClatchy, operator of thirty newspapers across the country, which as first reported in multiple stories by The Wrap recently introduced a new AI-driven “Content Scaling Agent” into all its newsrooms. “More stories, more inventory” was management’s hope and demand as they launched the tool to reporters with a groan-worthy Star Wars-style intro crawl.

It would have been controversial enough just for McClatchy to try to force reporters to use AI to rewrite and repackage their original stories for “new audiences, angles and entry points.” But as also reported in The New York Times last week, McClatchy truly stirred up the hornet’s nest when it said that it would use reporters’ bylines on AI-written stories adapted from their work even against their objections, in order to help get better Google rankings. “If they don’t have the ability in their contract to remove their byline, we’re going to use their name,” said management, leading to complaints from several of the newsrooms’ unions and a joint letter campaign by writers to withhold their bylines.

As Ariane Lange, an investigative reporter at the Sacramento Bee and the vice chair of its union, told the Columbia Journalism Review,

I’ve covered traffic deaths in the city of Sacramento since 2024, and I have talked to many families of people who have been killed in crashes, and that’s a very vulnerable moment. I’m assuring them they can trust me, but I also have to explain that my employer might feed their story to a chatbot and spit it back out as five key takeaways. That’s revolting to me.

In an equally revolting development first reported by Model Republic, a PR firm working for the AI industry was revealed in late April to have been running a completely fake AI-driven news site with stories attacking AI regulation and the industry’s critics. The discovery came when one of those critics received a fishy inquiry from a purported journalist from the site, The Wire by Acutus, that when run through the AI detector Pangram was ID’d as 100% AI generated.

On further investigation it appeared The Wire didn’t have any human journalists at all, and that it was linked to PR firm Novus working for the anti-AI-regulation super PAC Leading the Future that’s funded by OpenAI’s president Greg Brockman and venture firm a16z. When news of the bot-driven slopaganda site leaked, the PAC essentially confirmed it was created by their PR firm but without their knowledge, and said they were cutting those ties. Paired with recent news of the same PAC funding anti-AI-regulation astroturf campaigns amongst TikTok creators, this story certainly paints an unsavory picture of that PAC, their backers, and their tactics.

Thankfully, AI efforts like McClatchy’s that disempower rather than support journalists are receiving sustained pushback from newsroom unions in their negotiations, from the New York Times to ProPublica to CBS News. No news yet, however, on whether the Wire’s robot reporters are also organizing to improve their own working conditions.

Hannah Einbinder Swirlie Watch: Who’s Getting Flushed for Using AI This Week?

Last issue we highlighted how Hacks star comedian Hannah Einbinder went off on AI creators, calling them losers and saying she wanted to stick their heads in a toilet and flush (what any high-school bully knows as a “swirlie”). In the past few weeks, several new media figures have risked Hannah’s ire, and more will certainly volunteer for swirlies in the coming months, so I figure that Swirlie Watch will become a semi-regular feature here.

Who should be on guard against Hannah’s swirlie vengeance this week?

Director Alex Proyas (of The Crow and I, Robot) announced that he’d be working with AI studio Ex Machina to generate his next feature, a digital-afterlife sci-fi satire called Heaven.

Writer-Director Roger Avary (co-writer and producer of Pulp Fiction), also in partnership with Ex Machina, announced he’ll be adapting Milton’s Paradise Lost using generative AI.

Director Shawn Levy (Deadpool & Wolverine, the upcoming Star Wars: Starfighter) says he hasn’t “meaningfully” used gen AI yet in his own storytelling–so maybe he just gets toilet-dunked rather than fully swirled?–“but I have no doubt that in the course of my career we will see its integration… I think it’s going to be essential.” Nope, that’s a full swirlie right there.

Actor-Producer Reese Witherspoon doubled down on her comments urging women to learn AI, while making clear “I don’t believe computers should replace humanity” (phew, you had us worried there!).

Director Steven Soderbergh tripled down on the comments we reported on last issue with a much fuller explanation of the AI use in his upcoming John Lennon and Yoko Ono documentary, in partnership with Meta, defending that choice while also being fully transparent about it.

Producer Ryan Kavanaugh had the temerity to defend the virtually-produced Doug Liman thriller, Bitcoin: Killing Satoshi, raising the same possibility I articulated last issue: that virtual production might actually be a way to bring more movie productions and more industry jobs back to LA.

DJ and music producer Diplo said in a podcast interview and then reiterated on Twitter that “there’s no fighting AI” and “if you are a creative you need to adapt or just like give up and become an uber driver until everyone has a waymo.” That definitely warrants multiple flushes.

French electronic music pioneer Jean-Michel Jarre complained of the conservatism of creative industries “freaking out” about AI, predicting that artists would use AI “to create the cinema of tomorrow, the hip-hop of tomorrow, the techno of tomorrow, the rock’n’roll of tomorrow.” He says “[w]e should never be afraid of technology.” But he should be afraid of Hannah Einbinder.

OpenAI CEO Sam Altman pushed back on Hollywood concerns about AI replacing humans with some unconvincing pro-human pablum: “I think people really care about other people…. I think people really care about the human beings behind the stories and the art and the creative work that matters so much, so my instinct is it’s going to go the other way and people will care more about humans and more about human creators in the future, not less.” Problem solved!

NYU Film School’s administration and faculty earned a collective swirlie by partnering with Runway to put AI credits and training in the hands of its students for free (well, plus NYU’s exorbitant tuition). Looks like USC’s film school and the Sundance Institute may also have earned a dunking for getting in bed with Adobe and Google.

Get to work on those swirlies, Hannah! You have a long target list this week. Everyone else, be on guard if entering a public bathroom in Hollywood or NYC.

FRAGMENTS

Creator, Trademark Thyself

Taylor Swift is following Matthew McConaughey’s lead and filing to trademark her voice and image in an attempt to help combat misuse of both by AI. The actor’s advice: “So I say: Own yourself. Voice, likeness, et cetera. Trademark it. Whatever you gotta do, so when [AI] comes, no one can steal you.” Well alright alright alright.

ChatGPT Images 2.0 Can Now Fake Your Doctor’s Note

The new image model is, as highlighted by TechCrunch, surprisingly good at rendering coherent and readable text outputs, leading to all kinds of novel use cases popping up on Twitter.

Affleck Tops List of Most Powerful AI Players in Hollywood

The Hollywood Reporter published its list of the 25 most powerful people shaping the future of AI in Hollywood, and at the top was Ben Affleck whose AI startup sale to Netflix has helped prompt a vibe shift in the creative world around using AI tools in filmmaking. Or, as one commentator put it to Vanity Fair: having a prominent creator like Ben Affleck pushing AI “helps get filmmakers to feel more comfortable [using it]—and that’s worth the $600 million” that Netflix paid him. For my part I’m very supportive of the creative use of AI but “he got paid hundreds of millions to use his celebrity to normalize AI” seems a bit gross, and as reported in the last issue, Affleck and Netflix also haven’t been very forthcoming about the technology behind the startup’s product nor about the copyrighted works behind that technology. But being the most powerful isn’t always pretty!

New Creative AI Integrations, Integrating AI Creatively

The last couple weeks saw some big releases in the realm of integrating creative tools into chatbots and chatbots into creative tools.

On April 23rd, a16z and Union Square Ventures-backed startup Glif came out of beta with the release of Glif v2, pitched as “it’s like Claude Code for AI videos”: a “creative super agent” chatbot interface that integrates with virtually every available video and audio gen model for a unified creative dashboard.

Five days later the makers of actual Claude announced Anthropic’s own integrated creative offering with a raft of new connectors between Claude and a wide range of creative applications: the entire Adobe Cloud suite of tools, Blender, Canva’s Affinity, Ableton, Splice, SketchUp, and Resolume. This is a clear warning to startups like Glif: when building wrapper interfaces around other people’s models and services, you risk the model-makers themselves offering the same features directly.

And, in a story I missed before putting out the previous issue, Google and Avid announced earlier in April the integration of Gemini AI features into the Avid editing suite, a godsend for editors trying to locate just the right shot in a heaping digital pile of other takes. Harried editors can now just describe in chat the particular visual movements, dialogue or emotional cues they’re looking for and Gemini will help surface the clip they need.

Regardless of whether you’re using AI to generate art or just tweak or edit it, and whether you’re using a startup’s toolkit or subscribing to a foundation lab’s frontier model, creative AI is getting exponentially easier to use by the day, and the trend will likely continue to be toward one creative interface to rule them all.

Because Parasocial Relationships with AI Aren’t Weird Enough

The dominant player in vertical comics, Webtoon, has inked a deal with AI avatar tech company Genies to let users chat with their favorite comic characters. Still unclear what the content guardrails or age restrictions around that tech might be but worth noting that Disney has a deal with Webtoon to build Marvel’s new digital comic platform, so we may end up seeing this tech applied to many more popular characters than just those in your favorite manga.

Because Parasocial Relationships with AI Aren’t Weird Enough, Part Two

The New Yorker published a fascinating and disturbing article about TikTok and Instagram influencers using AI to pose as different races and genders; as the author put it on Twitter, “it gets weird real fast.” See also this related Wired story on fake MAGA girl influencers, and this creepy demo video I found on Twitter of a college dude using AI to pose as a bikini blonde. As the article retweeted by that poster noted, you now never know whether the influencer you’re watching is made out of four files on a laptop somewhere.

The Other Ryan Gosling Astronaut Movie

I really enjoyed Project Hail Mary—go see it on a big screen before it’s gone!—which reminded me of another (tragically underappreciated) movie starring Ryan Gosling as an astronaut: Damien Chazelle’s Neil Armstrong biopic First Man. That earlier movie inspired me several years ago to write an Atlantic article about the feedback loop between movies and science, using the example of how the orbital mechanics of the Apollo missions were presaged decades prior in Fritz Lang’s 1929 silent film Woman in the Moon, informed by Hermann Oberth, the father of German rocketry and the first movie science consultant. (You can read several more of my articles about the sci-fi feedback loop over at Slate).

Warner Bros. Shareholders Approve Paramount-Skydance Merger Bid

Former FTC commissioner Alvaro Bedoya briefly highlights ways it might still be stopped; Wade Major of Hollywood Heretic dives deep with nearly 15,000 words on why he thinks the Netflix takeover was always doomed and the Paramount merger will be better for Hollywood. I’d still prefer no merger at all but it’s a fascinating piece.

Window Treatment: (Almost) All Studios To Let Movies Stay in Theaters Longer

Ever since the pandemic, studios have been aggressively shortening theatrical windows for their movies in a short-sighted attempt to shore up their streaming operations. But it looks like the windowing wars may have reached a ceasefire as the studios are finally listening to exhibitors, and seeing more clearly how this self-cannibalization has been leaving theatrical money on the table. Based on a slew of recent announcements, longer windows appear to be the “new gospel” in Hollywood: Last Friday Netflix announced Greta Gerwig’s Narnia: The Magician’s Nephew will get a true wide release in February 2027 with a 49-day exclusive run, Netflix’s first real theatrical window after Ted Sarandos pledged 45 days under oath to a Senate antitrust subcommittee in February. It caps a month that saw every major reaffirm a 45-day floor at CinemaCon: Sony’s Tom Rothman told exhibitors to enforce it, Paramount’s David Ellison made a surprise appearance to commit to it effective immediately, and Disney reiterated its 57-day average. Apple’s the only studio that hasn’t yet committed to a longer window, but I’m guessing its eventual F1 sequel will be in theaters for a long, long time.

Proving (or Pretending) You Didn’t Use AI for Your Writing

Following up on her New York Times editorial on the AI-driven erosion of trust between authors and readers, novelist Andrea Bartz wrote an in-depth Substack article on the challenges of proving that you didn’t use AI in your writing, ultimately concluding that the best way to fend off accusations for now is to thoroughly document your writing process. (Notably, Bartz is the lead class representative in Bartz v. Anthropic, which along with Kadrey v. Meta is the flagship litigation against the tech giants for training their AI models on her and other authors’ novels without permission. Another lawsuit against Meta–and Mark Zuckerberg personally–by novelist Scott Turow and five publishers just dropped this week.)

Meanwhile, there’s a fun new AI tool for those who want to humanify their emails, from Dorm Room Fund tech investor and tinkerer Ben Horwitz: Sinceerly.com. Horwitz calls it the anti-Grammarly, and it uses AI to mess up your email drafts to make them seem more like something a person would write. There are three settings: the minimal-changes Subtle setting, the intermediate Human setting, and–with maximum typos and abruptness and minimal grammar compliance–the CEO setting 😂

The Actors Guild Deal Has…Some Sort of AI Protections?

Last issue I bemoaned the lack of new protections against AI replacement for members of the Writers Guild of America in their fresh four-year deal with the studios, and pointed to the upcoming SAG-AFTRA negotiations as another opportunity for Hollywood’s creative class to secure AI concessions. Well, now that actors’ union has also signed a four-year deal with the studios and it has…some sort of AI “guardrail” provisions? Or “safeguards” or “protections”? But no one reporting on the deal seems to have any details on what these protections are. So, um, hopefully they’re good?

AI Film Wins at a Traditional Film Festival for First Time; Academy Nixes Awards for AI Writing and Performances

Depending on your perspective this is either a travesty or an auspicious first, but: in mid-April, Memory of Princess Mumbi–a feature film from Swiss-Kenyan director Damien Hauser that substantially used AI to generate its futuristic sci-fi background–won the top prize at the Istanbul Film Festival. As Hauser discussed before winning the award, his portrait of a retro-futuristic Africa in the year 2093 would not have been possible without AI, even as he set out to “make a movie that AI [alone] could never make.”

On the other side of the world, the Academy Awards organization released on Friday new rules making clear that AI-generated performers are not eligible for acting awards (so, e.g., no award for AI-resurrected Val Kilmer), and only human-authored scripts are eligible for screenwriting awards. The rules notably did not address other uses of AI in regard to other awards–so, e.g., something like Memory of Princess Mumbi could still be considered for Best Foreign Film.

44% Slop and Rising: AI-Generated Music on Deezer

Late last month music platform Deezer (which I admittedly had never heard of before this story) disclosed that 44% of daily uploads–roughly 75,000 tracks a day, over 2 million a month–are AI-generated. But consistent with last issue’s piece on how older music is resonating with streaming listeners much more than new music is, whether AI-generated or not, the AI music on Deezer represents only 1–3% of streams. And 85% of those streams are flagged as fraudulent and demonetized. The company is now licensing its AI detector to other companies.

And that’s what’s converging this week! See you next time, and don’t forget to share if you liked what you read.